About Us

Empowering Data-Driven Success with expertise in Data Engineering

We are certified data engineers on AWS and GCP, holding the AWS Certified Data Engineer - Associate and GCP Professional Data Engineer certifications. As a data lake and data warehouse service provider in the data engineering field, we can help your organization quickly fulfill its requirements for designing and implementing complete ETL/ELT pipelines by following industry best practices in data engineering.

Is your company looking to move OLTP data into an OLAP system or into a centralized repository on cloud storage such as AWS S3 or GCP GCS, also known as a data lake? We’re the perfect partner for you. Our data engineering experts can help you design and implement ETL/ELT pipelines that ingest data into a data lake or data warehouse according to your requirements and on your preferred cloud platform, such as AWS, GCP, or Azure. Our simplified, data-driven approach helps you get insights from your data in the shortest possible time.

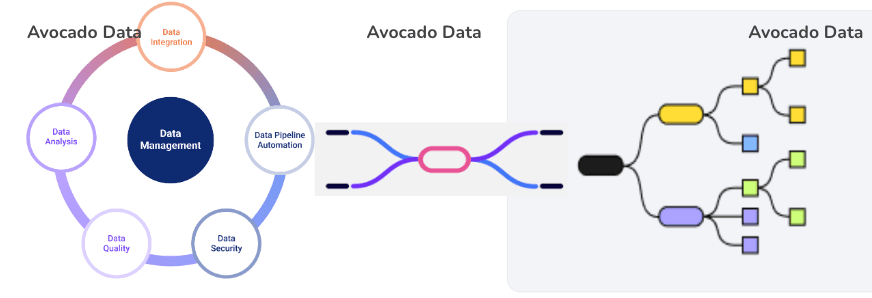

Our Expertise in Data Engineering:

As data engineers who specialize in managing large-scale data lakes and data warehouses (AWS Redshift, GCP BigQuery, Azure Synapse Analytics), we are committed to delivering comprehensive solutions that not only unlock the potential of your data but also ensure its security and compliance. Our data governance and engineering expertise empowers organizations to make informed decisions while upholding the highest data protection standards.

Scalable Data Pipelines:

We design and build high-performance data pipelines using the Apache Spark and Apache Flink frameworks, with Scala or Python, to efficiently handle large volumes of data in both batch and stream processing.

ETL/ELT Implementation:

We extract data from structured, semi-structured, and unstructured sources and load it into a data lake or data warehouse. The T in ETL/ELT, the transformation step, is configured through a YAML file for each pipeline, which allows actionable transformations on raw data. This approach improves the overall usability of the data lake or data warehouse once the data is ingested.

Data Lake Optimization:

We optimize data lake performance and cost-effectiveness by fine-tuning the storage settings of Parquet files. This ensures that the files are neither too small nor too large, avoiding excessive costs for ingestion or for data retrieval through Athena or other SQL-based interfaces. For more information, check out our technical blogs. To manage CDC data, we use Apache Hudi, Apache Iceberg, or Delta Lake, depending on the requirements and cloud platform.

- Cloud-Native Technologies: We use cloud-native technologies and serverless architectures for enhanced scalability and cost-efficiency. For example, on AWS we run Spark/PySpark jobs on AWS Glue and Amazon EMR Serverless to avoid the constant cost of always-on compute; on GCP we similarly use Dataproc and Dataflow for Spark/Flink jobs, along with Cloud Run and Cloud Functions. For data discovery and governance, we use cloud-native catalogs such as the AWS Glue Data Catalog, Unity Catalog, and the GCP Dataplex data catalog, and we can sync data catalogs across clouds using open-source tools like Apache Atlas. To orchestrate workflows, we combine AWS Step Functions, AWS Lambda, and Amazon EventBridge to automate data ingestion from various sources. We also specialize in workflow orchestration with open-source tools such as Apache Airflow, Dagster, and Prefect.

- Ingestion to Data Warehouse: We offer highly configurable pipelines built on Apache Flink or Apache Spark in Scala/PySpark that read from the data lake or from other structured, semi-structured, and unstructured sources and ingest the data into a data warehouse such as Redshift, BigQuery, or Azure Synapse. Our ingestion pipeline is designed as a DIY (do-it-yourself) solution: you just provide the source and destination details and the infrastructure where the code will run, such as an AWS EMR cluster or GCP Dataflow/Dataproc.

- Robust Data Management: We implement robust data governance frameworks to ensure data ownership, quality, and security. On AWS, when connecting to the data catalog, we always use AWS Lake Formation to maintain data lake security and enable fine-grained access control, including row-level and column-level filtering. We integrate with centralized data catalogs, e.g. AWS Glue Data Catalog, GCP Data Catalog, and Unity Catalog, to streamline data discovery and utilization. These data catalogs provide centralized access control, auditing, lineage, and data discovery capabilities; Unity Catalog, for example, replaces the legacy workspace-local Hive metastore model with an account-level metastore.

- Data Lineage and Impact Analysis: We provide tools and processes to track data lineage and assess the impact of changes to existing infrastructure, such as Databricks or AWS Glue pipelines. Centralized data catalogs like Unity Catalog, AWS Glue Data Catalog, and GCP Data Catalog provide the data lineage and impact analysis capabilities.
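To make the YAML-driven transformation step mentioned under ETL/ELT Implementation more concrete, here is a minimal sketch in plain Python. It is an illustration only: the config schema (`op`, `column`, `to`), the pipeline name, and the helper functions are all hypothetical and do not describe our production format. The YAML appears as a comment, and the dictionary stands in for what a YAML parser would return.

```python
# Hypothetical per-pipeline YAML (schema invented for this sketch):
#
#   pipeline: orders_raw_to_curated
#   transformations:
#     - {op: rename, column: ord_id, to: order_id}
#     - {op: cast,   column: amount, to: float}
#     - {op: drop,   column: debug_flag}
#
# PIPELINE_CONFIG is the parsed form of that file.
PIPELINE_CONFIG = {
    "pipeline": "orders_raw_to_curated",
    "transformations": [
        {"op": "rename", "column": "ord_id", "to": "order_id"},
        {"op": "cast", "column": "amount", "to": "float"},
        {"op": "drop", "column": "debug_flag"},
    ],
}

# Type names allowed in a "cast" step, mapped to Python constructors.
CASTS = {"int": int, "float": float, "str": str}


def apply_step(row, step):
    """Return a new row with one configured transformation applied."""
    out = dict(row)  # copy so the raw record is left untouched
    op, column = step["op"], step["column"]
    if op == "rename":
        out[step["to"]] = out.pop(column)
    elif op == "cast":
        out[column] = CASTS[step["to"]](out[column])
    elif op == "drop":
        out.pop(column, None)
    else:
        raise ValueError(f"unsupported op: {op}")
    return out


def transform(rows, config):
    """Apply every configured step, in order, to each row."""
    result = []
    for row in rows:
        for step in config["transformations"]:
            row = apply_step(row, step)
        result.append(row)
    return result


raw_rows = [{"ord_id": 1, "amount": "19.90", "debug_flag": True}]
curated_rows = transform(raw_rows, PIPELINE_CONFIG)
```

In a real pipeline the same idea would typically be expressed against Spark or Flink DataFrames rather than Python dictionaries; the point of the sketch is only that changing the YAML changes the transformation without touching pipeline code.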

By combining our data governance and data engineering expertise, we help organizations establish a solid foundation for data-driven initiatives while mitigating risks and ensuring compliance.

We have various modular, parameterised codebases ready to be set up in your organization, so we can help you bootstrap your data lake solution in a matter of days.
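As a rough illustration of what "parameterised" can mean for an ingestion pipeline where you supply only the source, the destination, and the infrastructure, the sketch below models those three inputs as a small validated specification. The class name `IngestionSpec`, its fields, and the allowed values are assumptions made for this example, not our actual interface.

```python
from dataclasses import dataclass

# Hypothetical allowed values, taken from the platforms named on this page.
SUPPORTED_TARGETS = {"redshift", "bigquery", "synapse"}
SUPPORTED_RUNTIMES = {"emr", "dataflow", "dataproc"}


@dataclass(frozen=True)
class IngestionSpec:
    """The inputs a user supplies: source, destination, and infrastructure."""

    source_uri: str    # where the data is read from
    target: str        # destination warehouse
    target_table: str  # destination table name
    runtime: str       # infrastructure where the job runs

    def validate(self):
        """Return a list of human-readable problems; empty means valid."""
        problems = []
        if "://" not in self.source_uri:
            problems.append("source_uri must include a scheme, e.g. s3://")
        if self.target not in SUPPORTED_TARGETS:
            problems.append(f"unsupported target: {self.target}")
        if self.runtime not in SUPPORTED_RUNTIMES:
            problems.append(f"unsupported runtime: {self.runtime}")
        return problems


# A valid spec: lake data on S3, loaded into Redshift, run on EMR.
spec = IngestionSpec(
    source_uri="s3://example-bucket/orders/",
    target="redshift",
    target_table="analytics.orders",
    runtime="emr",
)
errors = spec.validate()

# An invalid spec: no URI scheme and an unsupported destination.
bad = IngestionSpec("orders.csv", "oracle", "t", "emr").validate()
```

Validating the specification before any cluster is started keeps configuration mistakes cheap: a typo in the destination is reported immediately instead of after compute has been provisioned.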